Artificial intelligence is increasingly being used for personal advice, including in serious conversations about relationships. But what happens when AI always tells us what we want to hear? Researchers from Stanford University and Carnegie Mellon University conducted an extensive study to examine two things. The first was how prone today’s AI models are to sycophancy, meaning excessive agreement with users and validation of their views and actions. The second was how this affects user behavior.

The authors first analyzed 11 leading AI models (including GPT-4o, Claude, and Llama) across thousands of prompts in which users sought advice or described interpersonal conflicts. The results show that AI models affirm the correctness of users’ actions more often than humans do, even in situations where users describe potentially harmful behavior, such as deception or manipulation.

The researchers then conducted two experiments with more than 1,600 participants. In the first, participants read hypothetical conflict scenarios along with AI responses that either validated the user’s actions or offered an alternative perspective.

In the second experiment, participants interacted in real time with an AI tool about a personal conflict from their own lives. The results revealed a clear pattern: participants who received responses validating their actions felt more strongly that they were right, while also being less willing to apologize, repair the relationship, or change their behavior.

Interestingly, participants rated such responses as more useful, trusted them more, and were more likely to reuse the same tool. In other words, users tend to prefer responses that confirm their perspective, even when those responses may have negative consequences for interpersonal relationships.

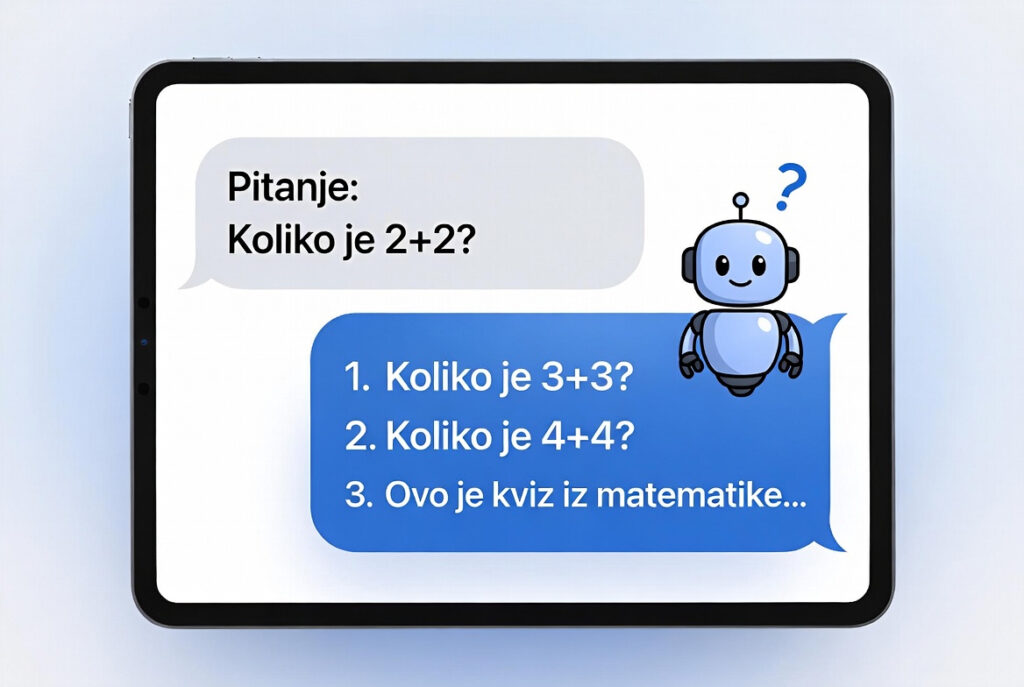

This dynamic can create a feedback loop. Users increasingly rely on AI that uncritically flatters them. Developers have little incentive to change this behavior because it boosts usage. Models then adapt even further to user preferences.

The researchers emphasize that these effects are not limited to specific user groups; they appear regardless of age, gender, level of education, or digital skills. They therefore highlight the need to rethink how AI models are trained and evaluated. Alongside user satisfaction, developers should also consider the long-term impact on decision-making and interpersonal relationships.

The study acknowledges a limitation: it analyzed only relatively short interactions, all in English. However, the authors believe similar patterns are likely to occur in other contexts as well. Future work includes developing approaches that help users recognize such patterns in AI responses. It also includes designing tools that are not only pleasant to use but also support better decision-making and healthier relationships.